If you’ve ever found yourself needing to search large data sets in PowerShell, you’ve probably become frustrated with how slow For-Each or Where-Object functions are to search through the data. Maybe you need to search through thirty thousand mailboxes in your Office 365 tenant or a hundred thousand user accounts in Active Directory. Using the For-Each or Where-Object against that large of a data set can take forever, especially on PowerShell 5.1

So, what do we do about this? The answer: Hash Tables! It took me way too long to figure out just how great hash tables are for this purpose. I’d watch my script take forty-five minutes or an hour to search, sort, and order my large datasets. But by using Hash Tables, I cut my script run time down to just a few minutes.

Table of Contents

Pre-Requisites

While PowerShell 7.1 does add speed improvements in searching through arrays or data sets with For-Each and Where-Object, in this blog we’ll be using version 5.1 on a Windows 10 machine version 20H2. Any Windows 10 or Server OS capable of running PowerShell 5.1 will also work fine.

Getting Started

A hash table is a type of data structure that stores its information in two pairs: the “key” and the “value”. This key/value combination is what makes hash tables powerful and very fast at accessing data. The “key” should be unique and will be what you use primarily to search in the table. The “value” can be anything that is associated with the key. I like to think of these as fancy lookup tables. The key is what I want to look up, and the value is what is returned.

To create a new, empty hash table, enter the following:

$mailboxes = @{}You’ll notice that these use curly braces {} as opposed to parentheses () that are used by arrays. Now we need to populate the Hash Table. You can create a very simple one manually by typing “Key”=”Value” on each line:

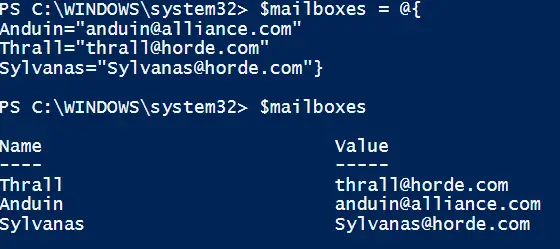

$mailboxes = @{

Anduin="anduin@alliance.com"

Thrall="thrall@horde.com"

Sylvanas="Sylvanas@horde.com"}Or you can do it all on one line separated by semi colons:

$mailboxes = @{Anduin="anduin@alliance.com";Thrall="thrall@horde.com";Sylvanas="Sylvanas@horde.com"}

You can enter the $mailboxes variable into the command line and it will output each key/value pair:

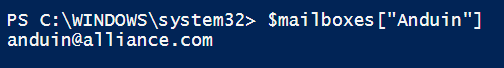

So let’s say I want to “lookup” Anduin’s email because I forgot what it was or simply that I need this value in my script. I can pass that into brackets in the hash table.

$mailboxes["Anduin"]And it will return the value:

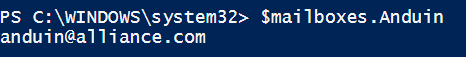

Since each key is a property of the hash table, you can also do this:

$mailboxes.Anduin

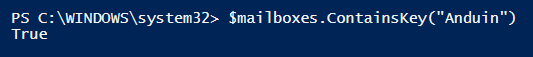

Additionally, you can also use the ContainsKey function to see if a key exists in the hashtable. It will return a Boolean value, true or false, depending on the condition.

$mailboxes.ContainsKey("Anduin")

OK now that we’ve got some of the basics out of the way, let’s move on using this with large datasets.

Populate your Hash Table

I was on a project for a very large customer where I needed to query and make calls against Office 365 for a large number of users. This number would increase over time and eventually get to be over 200k! To simplify this example, I had two datasets:

- One list of users that were getting migrated. Let’s call them “wave” users. These are batches of 5k, 10k, or 15k. We have basic user info including First Name, Last Name, and Email Address.

- Mailbox data stored in O365

I need to loop through each user in my list and make calls to O365 to get mailbox information. Assuming my variable for my wave users is stored in $users, one way I could do this would simply be:

foreach ($user in $users){

Get-Mailbox -Identity $user.EmailAddress

}This would work fine for several hundred or even a thousand users. But once you start getting larger amounts of users, this loop starts to take a long time. And what happens if this gets interrupted or you need to do a lot of testing? Waiting 1-2 hours for a call to complete each time you want to test is not my cup of tea.

I needed a better way.

Instead of making many individual O365 calls for each mailbox, I can do one “bulk” call that gets literally every mailbox. Like this:

$mailboxes = Get-mailbox -resultsize unlimited -Filter "IsMailboxEnabled -eq 'True'"While this command still can take some time, depending on the size of the environment, it is way more efficient. I also can export it to a csv so that I have an “offline” copy in case I need to re-import the data set. Let’s turn this into a hash table for incredibly fast searching and data manipulation.

To create a hash table with a large data set, we can simply use a for-each loop. I will loop through each mailbox in my $mailboxes variable and then set the “key” equal to email (the stuff in the brackets) and the “value” equal to all of the mailbox properties.

$mail = @{} #This creates an empty hash table

foreach ($item in $mailboxes){

#this loops through each item in $mailboxes

$mail[$item.EmailAddress] = $item #this sets email address as the key, and $item as the value (all properties of the mailbox)

}I chose EmailAddress as the primary key as they are unique for each user and that is also what I have in my wave users variable $users. This allows me to search or lookup values in the hash table by email addresses and it will return every property of the mailbox.

It’s pretty incredible how fast this is to create the hash table. For that customer with over 200k user mailboxes, it only took 845 milliseconds to create it!

Now that we have ALL mailboxes in a nifty hash table, we want to find the mailboxes of just our wave users (5k). I can do another loop and use each user’s email address to “lookup” their mailbox data from the mailboxes hash table.

$users = import-csv C:\temp\wave1users.csv

$wave_users_Mailbox = foreach ($user in $users) {

if ($mail[$user.EmailAddress]) { #if the email address returns true when looking up in the hash table

$mail[$user.EmailAddress] #then output the mailbox data and this will get populated into the $wave_users_Mailbox variable

}

}Now we have all of the mailbox data for our wave users. One more thing though.

I mentioned in the intro that this method is also way faster when searching through the data set for one user using Where-Object. Taking that real-world customer example I mentioned earlier (200k mailbox dataset), searching for my email address with Where-Object took around 2.5 seconds. Using the hash table though, it only took .0015 seconds!

That is a significant speed improvement!

Conclusion

Using hash tables in PowerShell is a powerful and efficient way of dealing with large data sets. They provide a fast way to search and sort and will shave off a significant amount of time in your scripts. Try it out!

Thanks for the solution. The printed code references the hash array as “$mailboxes” but it should be “$mail”. 🙂

Good catch! I’ve updated the blog.